Cybernetics in the 3rd Millennium (C3M) -- Copyright 2006 by Alan B. Scrivener -- www.well.com/~abs -- abs@well.com

Volume 5 Number 1, Jan. 2006

(If you haven't read parts one and two, see the archives, listed at the end.)

WHY I WANT TO OWN A MOVIE STUDIO

There's a broken heart for every bulb on Broadway.

-- show-biz expression

I'm the only son of a bitch in this studio.

-- Walt Disney

After my last issue, I got some questions about my statement that:

it seemed like everyone in show-biz lost out at some point or another, except the studios, which tightened their winches of control slowly. I realized there was only one job in the movie industry I wanted any more: "studio owner."

What I meant was that everyone was exploited but the owners, so I must become an owner to avoid being exploited. It is not my goal to exploit the talents of others, but to enter into mutually beneficial contracts. (Still, I may end up exploiting people anyway -- that may be the only way the system works.)

An object lesson in the importance of studio ownership can be seen in recent events involving Steven Spielberg. He used to be an owner of DreamWorks SKG (the S, in fact), but he and his partners sold it to Paramount in December 2005. Now he's just a director, and he's mad at Universal for promoting "Cinderella Man" over his "Munich" as an Oscar contender. If HE gets kicked around, what hope does anyone else have of getting any respect?

I got a nice email from another programmer type who confirmed some of my conclusions about the F/X biz:

A colleague forwarded me the Sept. and Nov. issues of your newsletter and I found it a very interesting read. I came to similar conclusions about the gaming industry after interviewing at a few companies in Salt Lake. (I could probably still get a job at MS games or in the DirectX group in Redmond if I cared to move to Seattle, which I don't.) I also found your observations about the F/X industry in Hollywood to be spot on the money. I have some colleagues who made their way into that industry, but the culture of it always turned me off -- I'm just too much of an engineer and not enough of a sycophant for it to work for me. I think I came to that realization at SIGGRAPH 1992 in Chicago when everyone was abuzz about T2 and its implied change for the effects industry. Suddenly CGI was what everyone wanted because they could paint out the wires on the stunt in post and didn't have to try to hide the wires anymore. SIGGRAPH 92 was awash in Hollywood types who were ready to instantly dismiss the most talented and capable engineers (and CGI geniuses) simply because they had never heard of them, never mind that they were so new to CGI that they thought everything had been invented with T2.

I worked for E&S from 1989-1993, then Parametric Technology from 1993-1997 and Philips Digital Video Systems from 1999-2002. All of them did "graphics" and all of them eventually folded, shrank or are on a death spiral. Now "graphics" means Hollywood or gaming, and the latter is becoming more like the former every day, if they aren't already indistinguishable.

So now I work at a place called LANDesk which doesn't have anything to do with computer graphics, but unlike the graphics companies where I have worked, LANDesk is profitable and growing in a market that's barely been exploited on a worldwide basis.

I keep up my interest in cool graphics by supporting the demoscene, hosting the only demoparty in North America -- Pilgrimage.

I am also starting a computer graphics museum project here in Salt Lake City, beginning with the vintage hardware collections of myself and a few of my friends.

-- Richard

"The Direct3D Graphics Pipeline"

-- code samples, sample chapter, FAQ: <http://www.xmission.com/~legalize/book/>

Pilgrimage: Utah's annual demoparty <http://pilgrimage.scene.org>

It's always nice to have someone agree with me. His trajectory is also remarkably similar to mine, including volunteer activities to keep involved with 3D graphics.

THE VIEW FROM THE BOONIES

Well I walked outside into a sunny day

Called some friends to back my play

Went downtown to taste a few

Then over to the coast

To see what kind of trouble we could get into

What trouble we could get into ...

In sweet home San Diego

-- Sprung Monkey "Get 'Em Outta Here" (song, 1998)

on the CD "Mr. Funny Face"

So in 1998 I moved my family back to San Diego. We bought a house in Santee and put our daughter in the cool new school. My work in aerospace engineering, and later e-Commerce tools, paid the bills. I began to readjust to life south of Camp Pendleton.

We made the trek back up to LA in the summer for the 1999 SIGGRAPH conference. A few days before the conference, Silicon Graphics, Inc. announced they were changing their corporate identity to just the letters "sgi" in an attempt to get more commercial accounts. Then, on the first day of the show, they announced the layoff of 30% of their work force. My friend Mike P. ran up to me and said, "Have you heard they're reducing SGI by one third? They're taking out the G."

For a while I toyed with a screenplay outline, about a time 20 years in the future when an out-of-work negative cutter and a few other analog-castoffs from digital Hollywood plot to use EMP to fry all the computers in the LA basin. A heroic FBI agent saves the day. Helicopter shots of the Mulholland Highway, high action, lots of inside jokes about analog vs. digital. Working title: "Digital Direct."

Later, I began taking notes for a science fiction short story, "CG Is a Heartbreaker," about a future volume-rendering revolution, and how all the people who worked hard to make it happen got left behind and had to flip burgers.

Maybe this was all just sour grapes, but on a positive note I continued to study the problem of how to become a studio owner.

A CALIFORNIA OF THE MIND

so let's me and you go get a new tattoo

we can hop on the Harley and cruise

we can start at the pier and share a beer

head out to the desert I can feel it from here

ride all the way to where the lizards play

ridin' on and on and on

there's a million stars

it'll blow you away

and it's all so concrete blonde

-- Concrete Blonde, 1987

"Chew You Up and Spit You Out"

(an alternative version of "Still In Hollywood")

Moore's Law kept grinding away, and everything kept getting cheaper. It became possible for a tiny work of genius like "The Dancing Cow" (1999), directed by Taz Goldstein, to be made cheaply enough to be given away on the internet, complete with a heartfelt CGI teardrop scene, and to lampoon the "wannabe" mentality even while demonstrating it.

But for every no-budget project in LA there were many more being made all over the place in non-Hollywood environments. The classic of viral marketing, "The Blair Witch Project" (movie 1999), directed by Daniel Myrick and Eduardo Sánchez, was filmed on location in Burkittsville, Maryland. Rumor has it they bought the digital video cameras at Fry's Electronics, which has a very liberal return policy, and then returned them for a refund after the movie was shot. The "viral marketing" trick was to claim, on a low-cost web site, that the story was TRUE before ever revealing that the so-called "found videos" would be released in theaters.

I remember comedian Chris Rock, on some awards show, saying, "Can you believe that movie was made for $50,000? Where did the money go? Somebody's walking around with $49,000 in their pocket."

Critically acclaimed "Cold Mountain" (movie 2003), directed by Anthony Minghella, was shuffled off to Romania to save money.

The stylistically awesome "Sky Captain and the World of Tomorrow" (2004), directed by Kerry Conran, is an example of the ultimate "blue screen movie" that could've been shot anywhere. An article on the Apple web site explains:

Although "Sky Captain" looks like a budget-breaking film, producer Avnet says that in this case, looks are deceiving. "The film is an enormous success on a financial level alone, meaning this would have cost $50 million more in any kind of traditional way of doing this movie. That's because we did so little, and made up so much. And Kerry got the large canvas he wanted."

Conran agrees, and hopes the methods pioneered on "Sky Captain" will help even indie filmmakers get their movies made. "If you're shooting a romantic comedy in someone's home, you don't need this. But if your audience wants to see a world created, you're going to have to raise that bar and not spend half a billion dollars. Our biggest innovation was that we cost a fraction of what a conventional movie would cost to do the same sorts of things."

* * *

"The current tools are really quite sophisticated, pretty amazing. And, well, if I can do it, anyone can do it."

The current patron saint of shoestring-budget moviemakers must be Robert Rodriguez, whose 1996 book "Rebel Without a Crew: Or How a 23-Year-Old Filmmaker With $7,000 Became a Hollywood Player" contains the "10 Minute Film School" as an appendix. A film version of said "film school" is included on the DVD of his 1992 film made for $7,000 (!), "El Mariachi," backed with the more recent, slick and expensive "Desperado" (movie, 1995).

Rodriguez leveraged his cheapie films into big-time directing deals, made some money, and got out of Hollywood. He built his own green-screen computer graphics studio in his home town of Austin, Texas, and there made the disturbing but brilliant "Sin City" (movie 2005) directed by Frank Miller and Robert Rodriguez.

A WIRED magazine review warns what you're in for, but there is no doubt that Rodriguez has changed the rules:

Rodriguez is the first filmmaker to make studio-style movies out of his home office ... in Austin, Texas.

I wouldn't know about all of this if it weren't for my friend Wayne H., who badgered me incessantly in the summer of 2001, first to read Rodriguez's book, then to read "iMovie2 -- The Missing Manual -- The Book That Should Have Been in the Box" (book, 2001) by David Pogue, and lastly to buy a Digital Video (DV) camcorder and begin shooting videos. (I even landed a corporate client who paid for all my equipment on my first project.) And all the while Wayne was assuring me I was destined for greatness. What a pal.

Since then I have been forging a plan for producing what I call "Avocado Westerns," low-cost Westerns shot in California, as an homage to the Italian "Spaghetti Westerns" of the 1960s.

I would love to reinstate the old Grossmont Studios, which I learned of from the San Diego Historical Society.

Using "How to Write a Movie in 21 Days" (book 1993) by Viki King as my guide, I began to write a screenplay for a movie called "Frontera" about the smuggling of illegal aliens into California from Mexico.

Ms. King provided a humorous template for screenwriting, and suggested we type it into our word processors to get warmed up; so I did:

FADE IN:

INT. YOUR HOUSE - DAY

YOU are about to sit down at the typewriter. Suddenly you get

very nervous.

YOU

What is it supposed to look like on the

page? Do I put in all the camera angles? Do

I have to write everything everybody says?

INNER MOVIE

First time screenwriters are usually

terrified of screenform.

FADE OUT.

FADE IN:

INT. YOUR WORKSPACE - DAY

This is the stage directions.

CHARACTER NAME (V.O.)

Dialog should not extend beyond a line 2 1/2

inches from the right of the paper.

CHARACTER NAME

(Astonished) This is where you write the

dialog.

(MORE)

[page break]

...and so on.

Following this format I wrote part of a screenplay for "Frontera" and -- despite Bucky Fuller's caution never to show unfinished work -- I'm sharing it here:

But work on it halted after 9/11, when my consulting business went into high gear with projects on visualizing bio-terrorism threats and related problems.

THROUGH A GLASS DARKLY

The mode of the TV image has nothing in common with film or photo, except that it offers a nonverbal gestalt or posture of forms. With TV, the viewer is the screen. He is bombarded with light impulses that James Joyce called the "Charge of the Light Brigade" that imbues his "soulskin with sobsconscious inklings." The TV image is visually low in data. The TV image is not a still shot. It is not a photo in any sense, but a ceaselessly forming contour of things limned by the scanning-finger. The resulting plastic contour appears by light through, not light on, and the image so formed has the quality of sculpture and icon, rather than that of picture.

-- Marshall McLuhan, 1964 "Understanding Media: the Extensions of Man"

McLuhan inspires me to take the long view; we are living through the slo-mo conversion from film to High-Def (HD) video, and I must ask, what are we trading off? Borrowing both his style and some of his ideas, I offer this McLuhanesque table:

| film | video |
|---|---|
| "hot" medium | "cool" medium |
| edge detection | no edges, just colors |
| fine grain | mosaic |
| time sliced at 24 frames/second | time smeared at 30 frames/second |
| mostly dark | always bright |
| huge color palette (ask Disney animators) | NTSC = Never The Same Color (just compare any row of TVs) |
| watch in dark (eyes more dilated) | watch with lights on (smaller field of view) |
| reflected light ("the silver screen") | transmitted light ("you are the screen") |
| rushes, dailies, pickups | videofeedback (enfolding, Klein worms) |
| tearing ticket triggers a hormonal response | TV is the hearth, or a guest in the home |
| effects done with blue screen | effects done with green screen |
| higher in class | lower in class |

Let me elaborate on these little sound-bites.

| "hot" medium | "cool" medium |

| edge detection | no edges, just colors |

| fine grain | mosaic |

McLuhan defined a "hot" medium as one that does a lot of work for you, while a "cool" medium requires you to do a lot of work yourself. Headlines are hot, slang is cool. Marching music is hot, jazz is cool. And film, with its built-in edge detection (due to the physics and chemistry of the exposure process on the film), is much hotter than the mosaic form of TV, which has no "edges" except the ones you draw yourself. The elements of film grain can influence their neighbors, while each "dot" in the video camera's retina works alone, reporting its Red-Green-Blue values in total spatial isolation.

| time sliced at 24 frames/second | time smeared at 30 frames/second |

Paradoxically, the shutter of the film camera slices time into discrete "ticks" that are temporally isolated -- even if focused bright sunlight were to burn away the film emulsion under the lens in one frame, a fresh frame of film comes into the camera in the next time slice to start anew. Video cameras, especially old tube cameras such as Sony's "Trinicons," are very sensitive to bright light (like a human eye with its after-images) and will smear brightness over time, leaving a trail.

If you were superfast, like "The Flash" in D.C. comics, you would notice in a movie theater that the screen was pitch black most of the time, with brief flashes of brightness for each frame. The shutter is only open while the film is stationary; it is closed while the film advances through the projector. But when you watched television, you would see that the entire screen is always bright, while the current scan-line is super-bright, and the current dot being drawn is brightest of all, though this point of brightness moves by so fast we only see an average brightness. In both cases the optical illusion called "the persistence of vision" is filling in or smoothing out the visual glitches.

| huge color palette (ask Disney animators) | NTSC = Never The Same Color (just compare any row of TVs) |

I read in the classic hand-drawn animation text "The Illusion of Life: Disney Animation" (1981) by Ollie Johnston and Frank Thomas that some fine artists actually became Disney animators just to use the color palette of projected animation, which has more visible colors than any other visual medium.

The NTSC standard for analog TV color, by comparison, is so messed up that TVs for years needed "hue" knobs so you could fix the flesh tones to be pink.

And a visit to any TV store -- or even a sports bar -- will show you that colors vary from TV to TV. This problem will get better with HiDef and digital, but it won't go away.

| watch in dark (eyes more dilated) | watch with lights on (smaller field of view) |

It took me a while to realize the significance of watching movies in the dark. Of course it increases immersion; as Norma Desmond said in "Sunset Blvd" (movie 1950):

This is my life. It always will be. There's nothing else. Just us and the cameras and all those wonderful people out there in the dark. All right, Mr. DeMille, I'm ready for my close-up.

With the screen filling the field of view and nothing much else to see in the theater, we find film very engrossing. But at last I realized that an important side-effect is that we watch WITH OUR EYES DILATED. Our pupils dilate unconsciously when we look at things we find interesting; it has been suggested that the converse also applies, that watching with dilated eyes makes us more interested.

TV of course is this little bright box in a bright room that doesn't fill our field of view or command as much of our attention. (Though we do create trance-like alpha brain-waves when watching it, no matter what's on, in contrast to the more active beta waves when reading or conversing. Google "Thomas Mulholland" for more info.)

| reflected light ("the silver screen") | transmitted light ("you are the screen") |

As for the significance of reflected vs. transmitted light, I do recall that in computer graphics making something "internally lit" gives it an otherworldly, almost religious luminance. And I find McLuhan's slogan "you are the screen" to be pretty evocative.

| rushes, dailies, pickups | videofeedback (enfolding, Klein worms) |

Filmmakers know of the time-delay in getting feedback from film. In most movie productions the crew gathers each evening to review the "rushes" or "dailies" which have been rushed through development and printing, and any mistakes not caught during the shooting are fixed with "pickups," re-shoots that may have to be done days or weeks later depending on other resources.

Video, by contrast, is all about immediate feedback. Videofeedback is an art form (there's no such thing as "filmfeedback") created by pointing the camera at the screen, and it has since been studied for clues to the nature of complexity and chaos.

Paul Ryan's 1973 manifesto on radical video, "Birth & Death & Cybernation," explores the "enfolding" of video into its environment, such as when you tape people, then tape them watching the tape and reacting, and so on. He even created diagrams he called "Klein worms" to try to illustrate this process.

| tearing ticket triggers a hormonal response | TV is the hearth, or a guest in the home |

Of course, McLuhan's assertion -- that when the usher tears your ticket in a movie theater, it triggers a hormonal response -- may or may not be literally true, but it RINGS true. During most of my childhood I had vivid, memorable dreams only after seeing a movie in a theater.

TV doesn't swallow us the way movies do; we tend to relate to it as a fixture (fireplace, aquarium) or guest in our home. TV station WPIX-11 in New York City still shows a roaring fire with Christmas carols playing every holiday season.

| effects done with blue screen | effects done with green screen |

I'm not sure how much to make of it, but you can tell long-time film people from long-time TV people by the unconscious selection of "blue screen" (film) or "green screen" (TV) when talking about chromatic mattes.

The movies had it first, with the Dunning-Pomeroy optical process in the 1920s; TV later updated the idea to an electronic "chroma key."
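To make the idea of a chromatic matte concrete, here is a minimal, hard-edged chroma key sketched in Python. It is an illustration under stated assumptions, not how any particular keyer works: pixels are assumed to be 8-bit (r, g, b) tuples, the dominance threshold of 40 is arbitrary, and the function names `is_foreground` and `chroma_key` are hypothetical. Real keyers, optical or electronic, build soft graded mattes and suppress color spill, which this sketch skips.

```python
def is_foreground(pixel, key="green", threshold=40):
    """Return False if the pixel looks like the backing color, True otherwise.

    A pixel counts as backing when the key channel exceeds both other
    channels by more than `threshold` (an arbitrary, assumed value).
    """
    r, g, b = pixel
    if key == "green":
        dominance = g - max(r, b)
    elif key == "blue":
        dominance = b - max(r, g)
    else:
        raise ValueError("key must be 'green' or 'blue'")
    return dominance <= threshold


def chroma_key(foreground, background, key="green", threshold=40):
    """Composite two same-sized images (lists of rows of (r, g, b) tuples).

    Wherever the foreground shows the backing color, the background
    pixel shows through; everywhere else the foreground pixel is kept.
    """
    return [
        [fg if is_foreground(fg, key, threshold) else bg
         for fg, bg in zip(fg_row, bg_row)]
        for fg_row, bg_row in zip(foreground, background)
    ]


if __name__ == "__main__":
    actor = [[(20, 200, 30), (200, 150, 120)]]  # green backing, then a skin tone
    sky = [[(90, 140, 230), (90, 140, 230)]]
    print(chroma_key(actor, sky))  # [[(90, 140, 230), (200, 150, 120)]]
```

Passing `key="blue"` gives the film-style blue-screen version of the same test; the only thing that changes is which channel has to dominate.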

| higher in class | lower in class |

This is always relative; in 19th-century terms actors ranked with vagabonds and hobos as untouchables, and this persisted into the movie era: until the superwealthy "United Artists" founders Chaplin, Pickford and Fairbanks, no actors were allowed to live in Beverly Hills. Nickelodeons were also associated with disease, considered -- like music halls before them -- a place to pick up germs. (You'd think TV would be more hygienic by this line of reasoning.)

But after World War II, when the TV networks were trying to break into the entertainment biz, almost entirely from New York City at first, no self-respecting Hollywood movie actor would be caught dead acting on TV. Milton Berle, "Mr. Television," was considered shameless by the LA crowd. Only in the last generation has this old stigma faded; now stars can move freely from movies to TV, though film still pays more.

TAKING STOCK OF FILM

A man who knew what movies are all about passed away this weekend. Samuel Z. Arkoff died of natural causes at the age of 83 in Burbank Sunday. One of the founders of American International Pictures in 1954, Arkoff knew that the teen population would gladly feed their cushy post-war allowances to the box office for the right fare.

AIP started with horror pictures like "The Beast with a Million Eyes" and "It Conquered the World" and quickly moved into rebel pictures like "Motorcycle Gang" and "Reform School Girl."

-- Fanboy Planet: In Memoriam: Samuel Z. Arkoff, 2001, by Jordan Rosa

( http://www.fanboyplanet.com/guest/rosa-arkoff.htm )

McLuhan predicted that every medium would remain invisible until it became obsolete, and then it would become a subject for preservation and nostalgia. This will happen with film as it disappears. There is already an archive of 16 mm classroom films called "Cine16," whose films are shown only on old classroom projectors, not scanned into video.

One thing I will miss is "film stock jokes." Occasionally a filmmaker will use the quality of film stock as an aesthetic statement, usually humorously. George Lucas did this in "American Graffiti" (movie, 1973), when he shot his masterpiece "coming of age" movie (which went on to become the #2 highest grossing movie to date) on the same cheap, cruddy color film stock used by American International Pictures (AIP, or "ape") for their cheesy youth exploitation movies.

He went even further when he produced the sequel "More American Graffiti" (movie, 1979) directed by Bill L. Norton.

The movie's complicated plot jumps back and forth between four consecutive New Year's Eves in the late 1960s, and each is shot on a different film stock. The aspect ratio even changes, ranging from 1.85 to 1 for documentary-style Vietnam scenes to 2.35 to 1 for San Francisco psychedelic shots in split-screen.

More recently the "mockumentary" of Hollywood independent director Morty Fineman, "The Independent" (movie 2000), directed by Stephen Kessler, used film stock jokes to great effect. Fineman Films seemed almost always to be on not only bad but inconsistent stock.

There is a scene in this hilarious show-biz spoof where Morty is being interviewed by two students with a tiny battery-powered Digital Video (DV) camera on a tripod. Morty seems confused. "You guys ready?" he asks doubtfully. "Got power? Lights?"

SOMETHING FUNNY'S GOING ON IN THE PROJECTION BOOTH

Star Wars Episode I -- The Phantom Menace was the first film to be projected digitally, and Episode II -- Attack of the Clones was the first film to be shot digitally in its entirety. This should have caused a paradigm shift but we're still sitting here waiting for the revolution to happen. Currently, there are only five of us who've worked with digital (now there's six) -- a very small group of people out of thousands who work in the film industry -- know that it's going to happen -- but how long is it going to drag on for before we get to the digital world?

-- George Lucas, giving the 2005 SIGGRAPH keynote speech

( http://features.cgsociety.org/story_custom.php?story_id=3065&page=2 )

Regarding Lucas' quest to produce an all-digital movie, from original shooting to final projection for an audience, some pundits have questioned whether such a picture would be eligible for an Oscar. Current academy rules require that a FILM be screened in Los Angeles during the year under consideration. Could this lead ultimately to the absurdity of a producer making a single optical print -- and the ridiculous expense that would entail -- in order to qualify? Or will the rules be changed by then?

My friend Wayne has been tracking the technology of digital projection for a while now. I got him a full-access pass to the 2001 SIGGRAPH conference, in return for which he had to write an article about the experience. He wrote mostly about digital projection, which he learned about at an off-site session called "The Technology of Digital Cinema."

One of the things I learned from Wayne's article is that in theater-grade digital projectors the data stays encrypted right into the projection head, to prevent someone from tapping into the data stream, making a copy, and trying to play it back to the projection head later. I was even told that some digital projection systems can modulate the projected image to encode a watermark that will show up in any pirate copy made with a video camera or camcorder. Ah, the brave new world of digital rights management!

Many years ago my wife and I made friends with a projectionist at the Harvard Square Cinema in Cambridge, Massachusetts.

We brought him coffee and he let us in free, but most of the time instead of watching the movie we'd hang out in the booth talking to him. One of the things I vividly remember is how toxic the projectors were and how carefully he had to handle them. Running genuine old-fashioned carbon arc lamps, he had a large metal and rubber hood to vent poisonous metal fumes, ozone, nitrogen dioxide and carbon monoxide away from the air in the workspace. Clearly an advantage of the digital approach is a reduction in toxins.

Another thing I remember was being shown the cue marks that warned it was time to change the reel. I'd never noticed them before and haven't noticed them since, but they're always there on an optical print.

In the digital era these invisible artifacts will quietly vanish.

Recently George Lucas invited a group of fellow directors, including Ron Howard, Bob Zemeckis, Steven Spielberg, Marty Scorsese and Oliver Stone, to compare film and digital versions of some scenes from the movie "Shrek." The catch was that for the film version he used a real, scratchy print that had been running in a mall theater for weeks.

They all agreed that the audience experience was much better with digital.

But the transition to digital projection is going more slowly than the studios would like. The main reason is economic: the already-strapped theater owners have to pay for the projectors and don't see much benefit, while it's the studios that will save millions in film print costs. Disney, for one, has attacked this problem recently with 3D movies. The article "Disney Goes Deep For 3D" explains that Disney's plan is to add value for the theater owners by offering something to compete with people sitting at home watching DVDs on their big-screen TVs: it released a 3D stereo version of its new computer-generated feature-length cartoon "Chicken Little" (2005), which could only play in digital theaters. (It doesn't hurt that studios are picking up $75,000 of the $85,000 price tag for each conversion.)

Digital projection has also allowed Regal Cinemas to create a chain-wide infomercial program called "The 2wenty," which they show for 20 minutes before the movie. Some patrons think it's just more annoying ads; others think it's less annoying than ads.

It does foretell the demise of the local ads on slides with music that we had for decades.

REZ

I used a low-rez mode to give it a high-tech look.

-- an album cover artist in the 1980s quoted in (now defunct) "Creative Computing" magazine

Thanks to the widespread use of computers, and more recently digital cameras, the word "pixel" is now in the dictionary. The concept of "resolution" is widely understood. What is less understood is the impact of increased resolution.

In the tech-thriller "Interface" (novel, 1994) by Stephen Bury, a media consultant asks an engineer if he's ever seen himself on TV.

"Just incidentally."

"How did you think that you looked?"

"Not very good. Actually, I was kind of shocked by how strange I looked."

"Your eyes looked as if they were bulging out of your head, did they not?"

"Exactly. How did you know that?"

"The gamma curve of a video camera determines its response to light," Cy Ogle said. "If the curve were straight, then dim things would look dim and bright things bright, just as they do in reality, and as they do, more or less, on any decent film stock. But because the gamma curve is not a straight line, dim things tend to look muddy and black, while bright things tend to glare and overload; the only things that look halfway proper are in the middle. Now, you have dark eyes, and they are deeply set in your skull, so that they tend to be in shadow. By contrast, the whites of your eyes are intensely bright. If you know what I know, you would keep them fixed straight ahead in their sockets when you were on television, exposing as little of the white as possible. But because you are not versed in this subject, you swivel your eyes around as you look at different things, and when you do, the white part predominates and it jumps out of the screen because of the gamma curve; your eyes look like bulging white globes set in a muddy dark background."

"Is this the kind of thing that you teach to politicians?"

"Just a sample," Ogle said.

"Gee, it's really a shame that--- "

"That our political system revolves around such trivial matters. Aaron, please do not waste my time and yours by voicing the obvious."

"Sorry."

"That's how it is, and how it will be until high-definition television becomes the norm."

"Then what will happen?"

"All of the politicians currently in power will be voted out of office and we will have a completely new power structure. Because high-definition television has a flat gamma curve and higher resolution, and people who look good on today's television will look bad on HDTV and voters will respond accordingly. Their oversized pores will be visible, the red veins in their noses from drinking too much, the artificiality of their TV-friendly hairdos will make them all look, on HDTV, like country-and-western singers. A new generation of politicians will take over and they will all look like movie stars, because HDTV will be a great deal like film, and movie stars know how to look good on film."

What is still poorly understood by the masses is the concept of dithering (trading spatial resolution for color). What is also seldom asked is "how many pixels are enough?"
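Dithering is easy to show in miniature. The sketch below uses ordered dithering with a 2x2 Bayer threshold matrix (one common technique among several; error diffusion such as Floyd-Steinberg is another) to turn gray levels into pure black-and-white pixels. Each 2x2 cell can show five distinct levels (0 to 4 white pixels), so tonal range is bought with spatial resolution. The function name `ordered_dither` and the 0.0 to 1.0 gray scale are assumptions for illustration.

```python
# 2x2 Bayer matrix: the order in which pixels of each 2x2 cell turn white
# as the gray level rises.
BAYER_2X2 = [[0, 2],
             [3, 1]]


def ordered_dither(gray):
    """Map a grid of gray levels in [0.0, 1.0] to 0 (black) / 1 (white) pixels.

    Each pixel is compared with a threshold taken from the Bayer matrix,
    tiled across the image, so neighboring pixels use different cutoffs.
    """
    out = []
    for y, row in enumerate(gray):
        out_row = []
        for x, level in enumerate(row):
            # Thresholds are 0.125, 0.375, 0.625, 0.875 for ranks 0..3.
            threshold = (BAYER_2X2[y % 2][x % 2] + 0.5) / 4
            out_row.append(1 if level > threshold else 0)
        out.append(out_row)
    return out


if __name__ == "__main__":
    # A uniform 2x2 patch at each level; the last value printed counts
    # white pixels, which rises 0, 1, 2, 3, 4 as the gray level rises.
    for level in (0.0, 0.25, 0.5, 0.75, 1.0):
        cell = ordered_dither([[level, level], [level, level]])
        print(level, cell, sum(map(sum, cell)))
```

Viewed from far enough away, a patch of 50% gray becomes a checkerboard of black and white that the eye averages back to gray; the price is that the 2x2 cell, not the single pixel, is now the smallest unit of tone.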

I just did a thumbnail calculation. Sources online tell me the fovea, the high-density part of the retina, is about 0.22 square mm in area, has a density of 200,000 cones per square mm, and represents about 7% of the retina. So there must be about 40,000 cones in the fovea, and if the whole retina were as sensitive, it would have about 570,000 cones. That represents our highest resolution over our entire field of view. Now I don't know what size -- measured as a solid angle -- our field of view is, but I'd estimate it's around 1/3 of a full, omnidirectional spherical view, so if we triple the 570,000 we get 1,710,000 -- which is about where our digital cameras are now. That's the number of pixels we'd need to cover the inside of a sphere finely enough that wherever we aim our fovea, it finds at least one pixel per cone.

Of course, for a while more pixels are still better, because they provide natural anti-aliasing, but after about a 10x increase there's no point -- you're wasting pixels. So when we got to 3K x 4K in film scanners, we were already bumping up against the approximate limit of how many pixels are needed for clarity.
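Here is the thumbnail arithmetic above written out in Python, so each step can be checked or re-run with different inputs. The inputs are the figures quoted in the text (0.22 square mm, 200,000 cones per square mm, 7% of the retina, a field of view of about 1/3 of a sphere), not independently verified physiology, and reading "3K x 4K" as 3,000 x 4,000 pixels is an assumption; the helper names are hypothetical. Note that 0.22 x 200,000 is actually 44,000: the text rounds down to 40,000, which gives roughly 1.71 million pixels, while the unrounded chain gives roughly 1.89 million. Either way a 3K x 4K scan lands around 6 to 7 times the estimate, inside the "about 10x" headroom.

```python
def foveal_cones(area_mm2, cones_per_mm2):
    """Cones in the fovea = area x density."""
    return area_mm2 * cones_per_mm2


def pixels_for_sphere(fovea_cones, fovea_fraction, view_fraction):
    """Scale the foveal count up to a whole retina at foveal density,
    then from our field of view up to a full sphere."""
    whole_retina = fovea_cones / fovea_fraction
    return whole_retina / view_fraction


if __name__ == "__main__":
    exact = foveal_cones(0.22, 200_000)          # 44,000 cones
    as_in_text = pixels_for_sphere(40_000, 0.07, 1 / 3)
    unrounded = pixels_for_sphere(exact, 0.07, 1 / 3)
    scan = 3_000 * 4_000                         # "3K x 4K", read as 3,000 x 4,000 (assumed)
    print(f"fovea: {exact:,.0f} cones")
    print(f"sphere (text's rounding): {as_in_text:,.0f} pixels")
    print(f"sphere (unrounded):       {unrounded:,.0f} pixels")
    print(f"3K x 4K scan is {scan / as_in_text:.1f}x the text's figure")
```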

PERSONS UNDER 18 NOT PERMITTED TO READ THE NEXT SECTION

Among the more interesting collections from this period are the photographs taken in New Orleans by E. J. Bellocq. These pictures showing the girls from the Storyville brothels in their rooms, relaxing in front of the camera, are an intriguing and valuable document of the era. They were discovered in the 1970s and printed by photographer Lee Friedlander and published, but very little is known about how or why they were taken.

-- "Nude photography, 1840-1920"

( http://photography.about.com/library/weekly/aa013100b.htm )

Many technology-watchers have noticed that erotic uses are often the earliest ones for new media. When Polaroid introduced their first low-cost instant camera, they called it "the Swinger."

Judging by the frequency of celebrity nudity leaked from cell-phone cameras (of Paris Hilton, Charlotte Church, Fred Durst, etc.), racy pictures are the "killer app" for these new gizmos.

A recent article on ZDNet, "As Goes Pornography, So Goes Technology," talks about the porn industry weighing in on the DVD format wars.

The concept may seem odd, but history has proven the adult entertainment industry to be one of the key drivers of any new technology in home entertainment. Pornography customers have been some of the first to buy home video machines, DVD players and subscribe to high-speed Internet. One of the next big issues in which pornographers could play a deciding role is the future of high-definition DVDs.

Nine years ago Hollywood made a movie about the porn industry and it titillated America all the way to the bank: "Boogie Nights" (1997) directed by Paul Thomas Anderson.

The main story of the movie is moral decline among the porn crowd (what a shocker) but there is a strong subtext that deals with the decline in product quality in the shift from film to video in the early 1980s. In one scene director Jack Horner (played by Burt Reynolds), who swore he'd never switch from film to video, ends up being forced to attempt a "gonzo" style "cinema verite" experiment, cruising the streets of LA with a porn-star in a limo with no script and no real plan. He narrates:

...and we're going along, like I said, west on Sherman Way, and this is called On the Lookout -- that's the name of this show, on the lookout for a young stud who maybe will get in the back seat here and get it on with Rollergirl; and we're going to make film history... right here on videotape.

DIGITAL KILLED THE VIDEO STAR

"Video killed the radio star."

-- The Buggles, 1979 (first song played on MTV, 1 August 1981)

( http://www.amazon.com/exec/obidos/ASIN/B000001FVL/hip-20 )

As I worked on this story I noticed that a lot seems to pivot on SIGGRAPH 2001 in L.A., just a month before 9/11. Maybe this was the twilight of a naive time when we thought the dot-com crash would be a short-lived phenomenon.

I have a memory of driving around downtown L.A. with my friends Wayne and Will, playing a tape on my car stereo and singing along with Weird Al's "It's All About the Pentiums" and with the Buggles' "Video Killed the Radio Star," only we were singing "Digital Killed the Video Star."

In the five years since then digital progress was slow for a while, but it's picking up speed again.

We keep being reminded that digital doesn't JUST mean the ability to make perfect Nth-generation copies. It also means easier repurposing. It means more mashups, more podcasting, more "vlogging," more ??? in the future using the available pieces of our culture. That's the POTENTIAL for digital.

One example of an emergent digital feature is "tagging." Our traditional way of sorting media has been to put it in hierarchical directories. Digital photos might be in a folder called Outdoor/Sky/Clouds -- but if there is also a folder called Cities/Detroit/RenaissanceCenter and you have a picture of the Renaissance Center with sky and clouds in the background, where do you put it? Tagging, which evolved at photo sharing sites like flickr, allows users to attach keywords to media, and then search by keywords. It sounds so simple, but -- in a trusted community -- it is a powerful tool for collaborative analysis and re-use.
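The folder-versus-tag dilemma above can be sketched with a toy keyword index in Python. This is not how flickr or any other site implements tagging. The `TagIndex` class and the photo file names are hypothetical. The key idea is that each tag maps to a set of items, so one photo can live under "clouds" and "Detroit" at once. Search then becomes a set intersection.

```python
from collections import defaultdict


class TagIndex:
    """Toy keyword index: each tag maps to the set of items carrying it."""

    def __init__(self):
        self._items_by_tag = defaultdict(set)

    def tag(self, item, *tags):
        # An item can carry any number of tags, unlike a single folder path.
        for t in tags:
            self._items_by_tag[t.lower()].add(item)

    def search(self, *tags):
        """Return items that carry every requested tag (case-insensitive)."""
        if not tags:
            return set()
        # .get() avoids creating empty entries for unknown tags.
        matches = [self._items_by_tag.get(t.lower(), set()) for t in tags]
        return set.intersection(*matches)


index = TagIndex()
index.tag("rencen_sunset.jpg", "sky", "clouds", "Detroit", "RenaissanceCenter")
index.tag("cumulus.jpg", "sky", "clouds")
print(index.search("clouds"))             # both photos
print(index.search("Detroit", "clouds"))  # only the Renaissance Center shot
```

The Renaissance Center photo no longer has to "live" in one place. It simply shows up in every search whose tags it matches.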

DRM AND GLOOM

They took the credit for your second symphony,

rewritten by machine on new technology,

and now I understand the problems you can see.

-- The Buggles "Video Killed the Radio Star" (pop song, 1979)

It seems like everybody in Western Civilization except the MPAA knows that the VCR was good for the movie industry. The reason the Hollywood studios failed to out-lobby the video rental stores (which began as little mom-and-pops, not chains like Blockbuster and Hollywood Video) was that each one of those mom-and-pops had a congressman, all over America, while the studios were localized in N.Y. and L.A., and only had a few sympathetic congressmen.

Well, they've learned from that one. The new push for Digital Rights Management (DRM) both technically and legally is starting to be the big downer of digital.

The movie companies tried to stop VCRs and then video rentals at the outset. The music industry released CDs with unencrypted, uncompressed digital audio data starting in 1982, and then had the gall to go back after the fact, over 20 years later, and try to "put the toothpaste back into the tube" by criminalizing the very thing I got the CDs for in the first place -- making my own music "mixes." (Did they think I wanted to listen to a whole Michael Penn album? No, all I wanted was "Romeo in Black Jeans" -- uh, I mean "No Myth.")

In the short story anthology "Dangerous Visions" (1967) edited by Harlan Ellison, Philip Jose Farmer had a novella called "Riders of the Purple Wage" in which a future artist made a 3D holographic sculpture of a bunch of Disney characters having an orgy. (I was reminded of the nearly contemporaneous 1966 poster commissioned by radical journalist Paul Krassner.)

Of course, thanks to the Sonny Bono Copyright Term Extension Act of 1998, the day when such an artwork will be legal has been pushed another generation into the future.

What we are creating is a generation with its fair use rights stripped away. Innovative works like DJ Danger Mouse's "Grey Album" mashup are relegated to the status of "hipcrime" (to borrow a word from John Brunner's 1968 sci-fi novel "Stand on Zanzibar," set in 2010).

So far we consumers have lucked out on some occasions when the content providers wanted to cancel our rights, because the "tech" companies have been on the other side, defending us. But we can't count on that continuing. This is pointed out in a new book, "Darknet: Hollywood's War Against the Digital Generation" (2005) by J. D. Lasica.

The point of view of the book seems to be that of Henry Jenkins, director of MIT's Comparative Media Studies Program, whose ideas are referenced in the introduction:

The ways in which people appropriate mass media cover a broad spectrum, Jenkins points out, and he draws some sensible lines in our virtual sandbox. He believes the laws should be changed to draw a legal distinction between appropriation by amateurs and appropriation for commercial gain. He would allow the kinds of borrowing and creative remaking that takes some kind of celestial jukebox where music or excerpts from other media could be sampled. But he would prohibit the distribution of wholesale works that haven't been altered or remixed by the audience, like the file trading that takes place in the movie underground.

"I think people who care about the public's right to participate in media culture should speak up against forms of media distribution that amount to out-and-out piracy," he says. At the same time, Jenkins and others believe entertainment companies are only hurting themselves when they brand any unauthorized use of their works as piracy.

But another factor here is that flat-out piracy sometimes HELPS content providers. The idiotic system by which movies and TV shows are released in stages around the world (which is why DVD region coding was such an issue) frustrates fans who pick up a buzz globally from the internet. The file-sharing network BitTorrent gave a boost to Joss Whedon's TV series "Firefly" (2002) and helped build word-of-mouth for his follow-on movie, "Serenity" (2005).

In fact, if you look at how TV is funded today, the winners with a system like BitTorrent are the content producers and consumers, while the losers are the "middle-men," broadcasters, video stores, etc.

And by extrapolation the ideal medium for BitTorrent would be infomercials, and shows with heavy product placement, as was predicted in the 1998 movie "The Truman Show" directed by Peter Weir.

But instead of harnessing this new power, the major studios are doing crazy things. Four years ago Sony tried a CD copy protection scheme that, it turned out, could be defeated with a felt pen. I read about it on the CNN web site, among other places. What nobody but the bloggers pointed out was that the CNN news story was itself a violation of the Digital Millennium Copyright Act (DMCA), since it revealed how to thwart copy protection. Nobody in government wanted to prosecute CNN and nobody in the majors wanted them to either, because it would have vividly illustrated how draconian and anti-First-Amendment the law is.

Perhaps the most positive development in the new format war between Blu-Ray and HD-DVD is that the public's resistance to DRM may be a major deciding factor, and may also prevent further insanity, such as the idea that you will need a new HD-TV with decryption at the screen (like those digital projectors with decryption at the projection head) to watch a hi-def DVD.

THAT THING YOU DO

You do not need the so-called traditional channels of distribution to get your work to an audience, and you'll probably be happier and more successful by not going through those channels.

-- Wil Wheaton, teen star turned geek blogger

Of course, when pressed, the majors say "we're doing it for the artists." And some of the artists, like Metallica, are behind them. But quite a few more are not. And when the RIAA sues a file sharer and wins a settlement, how much does the artist get again? If I'm not mistaken it's NADA.

My old pal Thaddeus, who is in the small-time music biz, was kind enough to point me at an illuminating link, to an article called "Some of Your Friends Are Already This F***ed," that details how the artist gets the shaft in the music business. Another view of this process is the delightful little movie "That Thing You Do" (1996) directed by Tom Hanks, which was shot in Orange, CA while I lived there!

And Thaddeus also pointed me at the real life adventures of pop singer/songwriter Janis Ian, who is using the power of the internet to offer free stuff to promote pay stuff.

People frequently point to the rock band U2 as an example of a band that "gets it" about digital and does right by their fans.

A recent New York Times article goes into some detail about the lessons of U2.

WHAT WOULD JERRY GARCIA DO?

The era of moviegoing as a mass audience ritual is slowly but inexorably drawing to a close, eroded by many of the same forces that have eviscerated the music industry, decimated network TV and, yes, are clobbering the newspaper business. Put simply, an explosion of new technology -- the Internet, DVDs, video games, downloading, cellphones and iPods -- now offers more compelling diversion than 90% of the movies in theaters, the exceptions being "Harry Potter"-style must-see events or the occasional youth-oriented comedy or thriller.

-- LA Times, 22 Nov. 2005

And of course the Grateful Dead is the ultimate example of a group that did -- and sometimes still does -- give their fans freedom, and get love in return.

As I said above, the winners in the digital realm will be the producers and consumers of content, and the losers will be middle men -- broadcasters, theater owners, video stores.

So what advice would I offer to those stuck in the middle?

The contradictory trends are cocooning and spectacle. People will stay in and watch their hi-def DVDs (or just stream bits off their hard drive, like TiVo) on their hi-def home theater with sub-woofer, and they will only be drawn out by spectacular events, like 3D movies and autograph signings.

Note that both these contradictory trends push things towards being more interactive, more kinesthetic, like the "Feelies" in "Brave New World" (novel, 1932) by Aldous Huxley.

So to the broadcasters and networks I say: do more live stuff. That's what you do best. Cross reality TV with blogging and MMOGs and podcasts and instant messaging, and pump it out as NEW STUFF.

To the theaters I say: more gimmicks, more 3D, other F/X, celebrity appearances, live events. Make it more of a party. Then maybe I'll bring my date.

To the video stores I say: offer content you can't get anywhere else. Become producers (in the direct-to-video model), and make your stores into movie buff hangouts -- even as you improve your on-line presence -- and appeal also to local moviemakers, forming little auteur clubs.

AN ARMY OF LOVERS

Everybody who comes to Hollywood has a dream. What's your dream?

-- clown on Hollywood Blvd. in "Pretty Woman" (movie, 1990) directed by Garry Marshall

Remember that the word "amateur" comes from the Latin AMATOR: lover, devoted friend, devotee, enthusiastic pursuer of an objective.

What springs to my mind are the talented Midwesterners at "Best Brains, Inc." who produced "Mystery Science Theater 3000" (or "MST3K" as its fans called it).

I think about all the best brains around the world and what a great time it is to be making movies as an "indy."

It was -- guess when -- in the summer of 2001 that my friend Wayne started dragging me up to Hollywood for meetings of the Los Angeles Final Cut Pro Users Group (LAFCPUG).

Final Cut Pro is a digital video editing program that runs on Macs; it is hi-def ready and pro quality. (The amateur equivalent is iMovie.) As Final Cut Pro goes, so goes Digital Video (DV).

The date of the first meeting I went to was 6/27/01. I still have my notes. It was held at the Los Angeles Film School.

I picked up some of their literature, and they were offering "digital boot camps" that taught shooting and editing in the new medium.

From my notes:

Seven months later the big news from the Sundance Film Festival was that DV was everywhere. An article from CNN, "Winick, Women and DV Top Sundance -- Digital Video Gaining In Serious Attention" (January 20, 2002) by Anne Hubbell, Special to CNN, tells the story of "Changing Technology" at Sundance:

One of the most important winners of the evening was a technology -- digital video, or DV. Nearly all the prizewinners utilized the medium.

DV appears to be here to stay as a viable, less expensive alternative to film. "It works really well for intimate scenes," said Miller about its use in "Personal Velocity." "DV doesn't work for stories told on a grand scale, but for personal, independent work it makes sense."

At a more recent LAFCPUG meeting, I learned that the Panasonic HVX200 is a hot new HiDef DV camera for only about $6,000.

THE MAJORS

It is only in the large community, where the Lords of Things as They Are protect themselves from hunger by wealth, from public opinion by privacy and anonymity, from private criticism by the laws of libel and the possession of the means of communication, that ruthlessness can reach its most sublime levels. Of all these anti-homeostatic factors in society, the control of the means of communication is the most effective and most important.

-- Norbert Wiener

Throughout this 'Zine I've talked about "the majors" like everybody knows what I mean. But telling the players can be hard without a program. For years there have been seven Major Studios (also involved with broadcast TV, cable, movies, music, book publishing and Internet) but just last month DreamWorks was bought by Paramount and we were down to six.

So to help myself sort it all out I made an HTML table (I think in HTML these days) and thought I'd share it with you-all.

A couple of things I want to say about this chart:

RIGHT BEFORE YOUR EYES

In the great media markets of Hollywood and New York the trend is toward consolidation; every year there seem to be fewer and fewer players controlling ever larger and more complex entities. Soon the whole world of entertainment may belong to Michael Eisner, Rupert Murdoch, Ted Turner, and one or two others. What is forgotten is that all these major players are aging men; what they have so competitively gathered together may, in only a few years, scatter again, break back into fragments. The entities they created are for a day, not forever.

-- Larry McMurtry "Roads: Driving America's Great Highways" (essays, 2000)

I hadn't even finished the chart before it was obsolete. I thought Sumner Redstone would never retire, so when he said he was splitting CBS out of Viacom and giving the two pieces to Les Moonves and Tom Freston to run, I didn't believe it. I thought he'd change his mind. (He still could; he controls 71 percent of the voting stock of both companies and is chairman of both.) But in January the two separate companies began trading as two stocks.

Then Time Warner announced it was teaming with CBS to create a new network called CW, and they would be closing down UPN and WB, taking some of the shows to the new network.

(And there was one more surprise... more on that later.)

In studying the evolution of the majors and their relationship to Digital Hollywood, I find nothing more instructive than to look at the trajectories of Lucas and Disney...

DISNEY DIPS...

The 2D [cel animation] business is coming to an end, just like black and white came to an end.

-- Disney CEO Michael Eisner, Spring 2005 at the Sanford Bernstein & Co. investors' conference

In C3M Volume 3 Number 8, Sep. 2004, "All I Know About Operations Research I Learned from the Walt Disney Company," I wrote:

The first biography of Walt Disney I ever read, "The Disney Version: The Life, Times, Art and Commerce of Walt Disney" (1968) by Richard Schickel, explained something I really already understood: that the entire economic engine of the Walt Disney empire was powered by the feature length cartoons. They originated the lovable characters that created demand for the TV shows, plush toys and theme parks. Without them, the whole enterprise fails.

This is why it seems so ironic to me that Disney CEO Michael Eisner has announced the end of 2D hand-drawn cartoons at Disney. Perhaps this is a repetition of the cycle in which the last management team was booted out at Disney. Company yes-man Card Walker and Disney son-in-law Ron Miller announced the effective end of 2D hand-drawn animation in the early 1980s, and Roy Disney and Stanley Gold forced them out and brought in Eisner in 1984.

My favorite source of information on the Walt Disney Company used to be Roy Disney's stockholder revolt site, SaveDisney.com, which he brought down after Bob Iger took over and offered to settle the lawsuit brought by Roy and Stanley Gold.

I have archived a couple of its pages: a links page and a timeline page:

But one of their most fascinating offerings was a series of essays by a former Disney financial analyst writing under the pseudonym "Merlin Jones" who chronicled the decline and fall of animation under Eisner.

In early 2005 I began wisecracking about a fictional press release from Disney top management which said they were going to outsource animation, movies, TV, cable, theme parks, merchandising and retail, and "concentrate our resources on our core competency: maximizing executive compensation."

Shortly before SaveDisney.com disappeared into the ethernet, the book "DisneyWar" (2005) by James B. Stewart came out.

There weren't very many surprises for me personally in it, because I've been following the story all along, but Stewart has done a wonderful job of putting all of the pieces together.

(If you're interested in the tale of the rise and fall of Eisner and you don't have time for all 550 pages, just read the six pages of the Epilogue.)

The big surprise for me was the news that when Michael Eisner and Frank Wells took over the company in 1984, Eisner wanted to cut animation loose right away, and Wells was ready to let him. It was Roy Disney who said he wanted to keep it and run it himself. Later he was joined by Jeffrey Katzenberg, and the two of them gave us masterpieces like "The Little Mermaid" (animated feature, 1989) directed by Ron Clements and John Musker, "Aladdin" (animated feature, 1992) directed by Ron Clements and John Musker, and "The Lion King" (animated feature, 1994) directed by Roger Allers and Rob Minkoff.

By now these cartoons were making hundreds of millions of dollars, and upper management began to micromanage the process -- it had become "too important" to not fix, even though it wasn't broken. The biggest disaster was firing everyone in the story department and replacing the "story-board" system created by Walt with scripts approved by budgeting bean counters (!).

Of course the whole thing fell off a cliff. Last time I went to Disney's California Adventure in Anaheim I visited the Animation building in the Hollywood land, and there was a beautiful timeline of Disney animation, stretching from 1901 -- when Walt was born -- to 2001, the year "Atlantis: the Lost Empire" was released, directed by Gary Trousdale and Kirk Wise.

(Of course it was an expensive failure.)

Tacked onto the end of the timeline was a sign saying "THE TRADITION CONTINUES" and some drawings from "Home on the Range" (animated feature, 2004) directed by Will Finn and John Sanford, which was the last hand-drawn feature film Disney made before booting all the animators.

An emotional telling of this collapse is in the independent cartoon "Dream On Silly Dreamer" (2005) directed by Dan Lund.

(It is not yet available on DVD, but has been screened several times on both coasts to appreciative audiences that have included former Disney animators. Watch for it.)

Another revelation in "DisneyWar" was that shortly before Eisner sent all of the animators packing, most of the animators signed a petition calling for Eisner's ouster. Hmmm. I'm reminded of a scene in John Cleese and Connie Booth's hilarious BBC TV show "Fawlty Towers" (1975) directed by John Howard Davies and Bob Spiers, in which the owner of a hotel drags his guests out of their rooms in the middle of the night because one of them complained about something, saying "no one else has any complaints, do you?" When they all do, he decides "the guests have to go" and throws them all out. (The scene ends somewhat happily when his wife arrives and reverses the decision. Will Bob Iger bring back Disney's animators?)

Rivaling Disney Corp.'s botching of 2D hand-drawn animation was its botching of 3D computer animation. Symbolic of this rough ride is the fate of Buena Vista Visual Effects. Disney's own web site, in the studio history area, has a thoroughly whitewashed version:

In the 1950s, as live-action films increasingly played a major role in the success of the studio, so did the inclusion of visual effects. Such memorable films as 20,000 Leagues Under the Sea and Darby O'Gill and the Little People began a tradition of combining complex optical effects with miniatures and matte paintings to create rich fantasy worlds on the screen. Throughout the 1960s and 1970s, the Process Lab, renamed Photo Effects and then Visual Effects, was home to the distinguished artists and technicians responsible for the effects seen in Mary Poppins, The Absent Minded Professor, Blackbeard's Ghost, Bedknobs and Broomsticks, Pete's Dragon, and Tron.

During the 1980s, the unit was named Buena Vista Visual Effects Group and expanded its facilities into the Camera building to include a motion-control stage. In 1990, the unit became Buena Vista Visual Effects (BVVE) and shifted rapidly to digital-imaging technologies. Rooms within the Camera building, which formerly housed multi-plane cameras used to shoot animation, were filled with computer equipment. BVVE transitioned to Buena Vista Imaging in 1996.

Today, Buena Vista Imaging occupies the Camera building, providing a full range of photo-optical and digital-imaging services, which include a black and white lab, digital workstations, film recorders and scanners, optical printers, and title graphics.

The real story of the computerizing of Disney has a lot more intrigue. In part one of this three-part 'Zine,

http://www.well.com/~abs/Cyb/4.669211660910299067185320382047/c3m_0407.txt

I told the story of how as early as 1986 "The Disney Late Night Animation Group" volunteered their own time to make an unauthorized test film called "Oilspot and Lipstick" directed by Michael Cedeno.

What is important to note is that Disney management did NOT see the future at that point, thinking that computer animation was "just a toy" and would never be up to the quality of hand-drawn animation, let alone live action photography.

Of course the guy who did "get it" was George Lucas (more on him in a minute), who founded the computer graphics lab that became Pixar when he sold it to Steve Jobs.

Playing catch-up, one of the few visionaries at Disney, Stan Kinsey, worked with Roy Disney to license Pixar technology from Ed Catmull. What this ended up evolving into was called the Computerized Animation Production System (CAPS), an automated ink-and-paint system.

In 1989 Disney used CAPS to create the final scenes in "The Little Mermaid" and released it without telling anybody. They wanted to see if any critics, competitors or the public noticed. Nobody did.

The company proceeded with CAPS, and also began to work to create its own internal CGI unit for live action film effects, which became Buena Vista Visual Effects (BVVE). As was widely reported (and mentioned by me in a previous 'Zine) Disney gave up on BVVE in 1996 and decided to import some F/X talent by buying DreamQuest Images. In 1999 the company decided to consolidate its holdings by folding DreamQuest into the feature F/X and CGI cartoon groups, creating a combined unit called "The Secret Lab." Two years later they abolished the Secret Lab and laid most of the people off. A lot of us Disney watchers thought "Huh!??!"

For a few years I tried to figure out what went wrong. Nothing made much sense. One of the problems was that the lab's first movie, "Dinosaur" (computer animated feature, 2000) directed by Eric Leighton and Ralph Zondag, was a flop, with stunning effects but major story problems; another problem was that the lab's last (unfinished) movie, "Wild Life," was turning out to be a homoerotic exploration before Roy got wind of it and cancelled it. (It may in fact have resulted in a delightful movie, but for obvious reasons it would be very difficult for Disney to release it.)

But these were all story issues. Management was supposedly micromanaging the story process in the hand-drawn movies, and ignoring it in the CGI ones. The common factor was that when things went badly they were blaming and firing the artists.

One of the Disney fan boards, MousePlanet, passed along a parody press release by a disgruntled ex-labber that addressed the destruction of the Secret Lab by putting words into the mouth of President of Feature Animation Thomas Schumacher:

"When we merged Dream Quest Images and Disney's computer animation operation, it represented a tremendous pooling of talent and resources. Both groups were involved in creating spectacular digital imagery, and the formation of The Secret Lab brought together a group of visual effects experts that were tops in their field. Disney built a first-class digital animation studio and pushed the boundaries of digital filmmaking," Schumacher said. "But it didn't really work out, so we canned them all."

Finally, I got an explanation from my friend Fran Z., who survived the Secret Lab apocalypse, but then was laid off herself in 1993. She said that DreamQuest was originally in low-rent facilities in the town of Simi Valley, about 30 miles west of Burbank, way out in the west end of the San Fernando Valley, which has lower commercial real-estate costs. Feature effects was in temporary facilities "off the lot" in a modest building in Burbank. Disney management moved both groups into a brand new, expensive building "on the lot," with state-of-the-art recreational and health club facilities, as well as expensive new computers, state-of-the-art large monitors, and even video games. Then some bean counter noticed that it cost Disney more to use its in-house facilities than to outsource CGI work to little shops with cheap facilities.

Of course the one CGI facility Disney couldn't botch was PIXAR; Disney micro-managers couldn't sabotage PIXAR's movies and they couldn't fire anybody there either. So Eisner in his insecure jealousy drove them off. Then he created his own little CGI group to make cheesy sequels of PIXAR movies, to bug them. This new group, hastily thrown together from people mostly hired away from DreamWorks Animation, makers of "Shrek" (computer animated feature, 2001) directed by Andrew Adamson and Vicky Jenson, was nicknamed "Pix-aren't," but its official name was "Circle 7," after the street it was on, part of the ABC channel 7 campus owned by Disney.

Of course, as we'll see shortly, life is not good for the Circle 7 group. In fact, the fates have never been kind to anyone in CGI at Disney, as far as I can tell, and there is no management or technical continuity in the whole, sad story. This is especially problematic with computer work, where programmers and artists need to build and maintain a toolbox of software tools to work their craft.

...WHILE LUCAS LEAPS

Supreme Leader: You think me beautiful?

Captain EO: Very beautiful within, but without a key to unlock it... and that is my gift to you.

-- "Captain Eo" (theme park ride, 1986) directed by Francis Coppola, produced by George Lucas

The most popular get-out-of-Hollywood story remains that of George Lucas, who moved his empire from Hollywood to Marin County -- a new facility he called "Skywalker Ranch" after the success of Luke Skywalker made him very wealthy. The story is told in the biography "Skywalking: The Life and Films of George Lucas" (1984) by Dale Pollock.

There is so much to say about Lucas. Five lines of inquiry immediately leap to mind:

THE DIGITAL CAMERA

Digital technology has always been a major element of George Lucas' creative process. Twenty years ago, he pioneered SoundDroid and EditDroid -- the first computerized non-linear sound and picture editing systems. These tools helped revolutionize the editing field, putting a single frame at a sound or picture editor's fingertips, rather than buried inside of thousands of feet of celluloid.

The technology is now available to allow the digital world to become part of the shooting process itself. In 1996, Rick McCallum obtained a commitment from Sony to develop a 24 frame high definition progressive scan camera, as well as the key building blocks of a 24 frame post production system. Panavision then came aboard to develop a revolutionary new lens that could accommodate digital cinematography.

When cameras rolled in June 2000, "Star Wars: Episode II Attack of the Clones" became the first major motion picture created by using the high-definition, twenty-four frames per second, digital video camera and videotape rather than film. "We received the final version of the camera one week before our first day of principal photography," McCallum remembers. "We started shooting without any film backup whatsoever. We just went for it. We shot in deserts -- where the temperatures were over 125 degrees for weeks -- we shot in torrential rain, and in five different countries throughout the world. All without a single problem."

Attack of the Clones director of photography David Tattersall notes that Lucas' interest in the potential of digital photography dates back even further than 1996 -- to their early collaborations on "The Young Indiana Jones Chronicles" and "Radioland Murders." Lucas and Tattersall shot some digital tests on their next effort, "The Phantom Menace," but the technology was not quite ready to be utilized for an entire feature film.

On "Attack of the Clones," Lucas and Tattersall finally had the opportunity to discover the numerous technical and practical advantages of digital cinematography. "With digital, we can time the movie as we're shooting it," notes Tattersall. "Also, there's never any doubt about whether or not you see something in the background. With film, when you review your shot you're looking at a pretty poor quality videotape, and it's sometimes difficult to see the subtleties. But with high definition video, there's absolutely no doubt about what the lens has captured. The playback on the HD monitor is crystal clear. You can see everything you want to see -- or shouldn't be seeing."

The use of digital cameras was a time-saver on numerous aspects of production. No longer hampered by the delays of film processing, scenes could be modified and edited as soon as Lucas yelled "Cut!", further blurring the lines between production and post-production. The digital format allowed unprecedented flexibility in the construction of shots, with editor Ben Burtt and Lucas having the freedom to change or move sets, people, and lighting within the image itself. In addition, visual effects shots no longer had to be scanned into a computer, manipulated, and then scanned back to film.

With this new high-definition camera, Lucas is mapping out an exciting digital future for the cinema. But he sees this as an evolutionary rather than revolutionary process. "The advance of cinema into the digital world is a normal transition," Lucas states. "Just as we went from silent films to sound pictures, from black and white to color films, digital cameras are an addition to the tools we use to create movies."

The camera's impact is felt even in the movie theater, as the digital format allows the film's images to retain their integrity, not just on opening night, but throughout the entire run of the picture. There will be no scratch marks, dust, or wear and tear on the digital prints of "Attack of the Clones" throughout their life in the cinemas.

Some have asked whether the revolutions in cheap moviemaking and digital effects might make people like Lucas obsolete.

A journalist asked him:

"Two guys in a garage" is the basic building block of Silicon Valley. Can you imagine a time when a culturally significant movie can be made by just two guys in a garage?

To which he replied:

Definitely. Definitely. That's what we did in film school. So far nothing culturally significant has been made in a film school, but it's all relative, of course. On my first film, THX-1138, there was a very, very small crew. I mean, it wasn't two people, but it was less than 20, so it was a very small group. It was made with very, very little money. Now we have things like Hi-8 and Photoshop. Some of the special effects that we redid for Star Wars were done on a Macintosh, on a laptop, in a couple of hours. And they look exactly the same because they're intercut with the old shots. Obviously, they were done by somebody who's brilliant. But at the same time, the mechanical technical wherewithal to do it exists today. I could have very easily shot the Young Indy TV series on Hi-8. Young Indy looks like big, giant movies. I mean, the quality is just as good; no one would have known the difference. So you can get a Hi-8 camera for a few thousand bucks, more for the software and the computer; for less than $10,000 you have a movie studio. There's nothing to stop you from doing something provocative and significant in that medium.

Then the reporter sprang the $64,000 question:

So why aren't there more guys in garages doing great movies?

Lucas nailed it:

It's hard. Why aren't there more people writing great American novels? I mean, that's what it comes down to. All the stuff is there. Everybody in this country who has graduated from high school, in theory, should be able to write the great American novel.

As an intriguing postscript to all this Lucasology, the "Hollywood Reporter" recently interviewed him and asked him about the future of movie media.

THR: What do you think will replace DVD?

Lucas: Pay-per-view.

THR: Something that streams in, not prerecorded media?

Lucas: It's the way kids do it today. It's how you do it on your iPod: They just download it. You pay 99 cents for music, and movies will be like two bucks. That will definitely change the economics of the business because (studios) are losing money now.

THIS IS THE BIG CITY

The place where I come from is a small town

They think so small, they use small words

But not me, I'm smarter than that,

I worked it out

I'll be stretching my mouth to let those big words come right out

I've had enough, I'm getting out

to the city, the big big city

I'll be a big noise with all the big boys, so much stuff I will own

-- "Big Time" (pop song, 1986) by Peter Gabriel

When I moved to L.A. County, that song was in heavy rotation on MTV. (When I moved back to San Diego 12 years later, it was Sprung Monkey's "Get 'Em Outta Here," about "Sweet Home San Diego.")

Some demographers have noted a trend in the late 1990s of people "moving home" from big cities. There was even a TV show about it, "Ed" (2000), created by Jon Beckerman and Rob Burnett, concerning a New York City lawyer who moves back to his home town of Stuckeyville after some setbacks in career and romance.

My own move from the L.A. basin back to San Diego was motivated by:

But I think there's something much bigger going on here than a retrenchment by some Boomers who are questioning the middle-class tradition of alienation through upward mobility. This may be the reversal of an urbanizing trend that goes back to the invention of agriculture, when suddenly there were a bunch of people who weren't needed (as they had been in a hunter-gatherer society) to produce enough food for everyone. Packing these folks into cities made it easier to distribute food, collect garbage, provide infrastructure, form guilds and pass on technologies, and even to collect taxes.

I have been known to define a CITY as "a human habitat usually located downhill from a natural water supply and uphill from a natural sewer." That will likely be true for a very long time, but the economic basis for cities has shifted radically in favor of suburbs and small towns since World War II. Marshall McLuhan said: "The city no longer exists except as a cultural ghost for tourists," and I think by this he meant that nobody needs to go downtown anymore -- even the downtown courthouses have finally opened branch offices in the suburbs.

I used to love the TV show "The 21st Century" (1967-1970) with Walter Cronkite, which predicted what life in the 21st century would be like. In the episode on the house of the future -- which I saw as a teenager, and watched again a few years ago at the Museum of Television and Radio in Beverly Hills, California (there's also one in New York City) -- we were told that in the future we could live someplace scenic like the Rocky Mountains, and use computers and satellite communications to work from home. A few decades later, when Disney's Imagineers were looking for ideas for the "New Tomorrowland" that opened at Disneyland in 1998, they seized upon "The Montana Scenario," in which the place people retreated to from the cities was Montana. The idea got watered down, but vestiges of it remain in the outcropping of copper-colored rocks gracing the entrance to Tomorrowland from the central Plaza.

One way this trend, if real, will manifest itself is in the slow dissolution of New York and Hollywood as entertainment capitals. There will be a "Walk of Fame" with stars on the sidewalk in every town in America -- if not the world.

BACK TO HOLLYWOOD

Americans are considered crazy anywhere in the world.

They will usually concede a basis for the accusation but point to California as the focus of the infection. Californians stoutly maintain that their bad reputation is derived solely from the acts of the inhabitants of Los Angeles County. Angelenos will, when pressed, admit the charge but explain hastily, "It's Hollywood. It's not our fault -- we didn't ask for it; Hollywood just grew."

The people in Hollywood don't care; they glory in it. If you are interested, they will drive you up Laurel Canyon "where we keep the violent cases." The Canyonites -- the brown-legged women, the trunks-clad men constantly busy building and rebuilding their slap-happy unfinished houses -- regard with faint contempt the dull creatures who live down in the flats, and treasure in their hearts the secret knowledge that they, and only they, know how to live.

Lookout Mountain Avenue is the name of a side canyon which twists up from Laurel Canyon. The other Canyonites don't like to have it mentioned; after all, one must draw the line somewhere!

High up on Lookout Mountain at number 8775, across the street from the Hermit -- the original Hermit of Hollywood -- lived Quintus Teal, graduate architect.

-- Robert Heinlein, "And He Built a Crooked House --" (short story, 1941), in "6xH: Six Stories" (short story collection, 1959)

My last visit to Hollywood was right after Christmas, 2005, to see the tourist ghosts. We had a few days' vacation as a family, and recently our daughter has expressed an interest in becoming a moviemaker. (She's already made 2 movies with Barbie dolls, collaborating with a friend, using my DV camera and her own iBook laptop to edit video with Apple's iMovie software.) So we designed a Hollywood vacation for a budding director. On the first day we saw:

Production was shut down for the holiday week, but we got to see the sets for "The Tonight Show" and "Entertainment Tonight." Our guide told us they used to point out the old analog cameras and say they cost as much as a car, but she wasn't sure what the new digital cameras cost. I looked at the name on one, "Digital Unicam Ikegami," and wrote it down; later I Googled until I found:

IKEGAMI HL-57 3CCD Digital Unicam w/lens, VTR NR $5,000

The barn where Cecil B. DeMille got his start has been moved several times, but it now sits prominently on Highland Avenue across from the Hollywood Bowl, and is home to the "Hollywood Heritage Museum."

I think it's cool that they sometimes show the "first Hollywood movie," DeMille's "The Squaw Man" (silent movie, 1914) -- which was made in this barn -- in this barn.

We also told our daughter the story of how DeMille had to share the barn; the other half was used to board a horse. Sometimes when the horse's owner hosed out the stall the horse manure would come under the wall onto DeMille's side. He began wearing his old cavalry boots and pants that tucked into them (jodhpurs) to work on his movie, thereby creating the archetype of the director in cavalry boots and jodhpurs.

Next we visited the new mall at Hollywood and Highland that has replicas of the old Babylonian elephants that D.W. Griffith had built for his epic "Intolerance: Love's Struggle Through the Ages" (silent movie, 1916).

The mall is built right into the old Mann's Chinese Theater, and it also contains the new Kodak Theater, where the Oscars are now held. (I wanted to take the tour, but I was outvoted.)

We had lunch at the food court, did some incidental shopping, and then walked next door to...

But they also have original sets from the TV shows "Cheers," "The X-Files," and "Star Trek: The Next Generation."

in honor of it being mentioned in the movie "Miracle Mile" (1988) directed by Steve De Jarnatt.

On the second day we saw:

that said to see the HOLLYWOOD sign from the Hollywood Reservoir:

Take US 101 to Barham Blvd exit. Follow Barham to Lake Hollywood Drive. Turn right and follow Lake Hollywood Drive to the top of hill, continuing around the sharp right curve and making a sharp left onto Montlake Drive. Turn left at Tahoe and right on Canyon Lake and go up the hill to the dog park on the left.

We ended up spotting the sign while driving around the reservoir, and stopped at the corner of Montlake and Tahoe to hike back to the view spot we had noticed (where there is no parking). To see where I mean, bring up Google Maps and search for "Montlake Drive & Tahoe Drive, Los Angeles, CA"

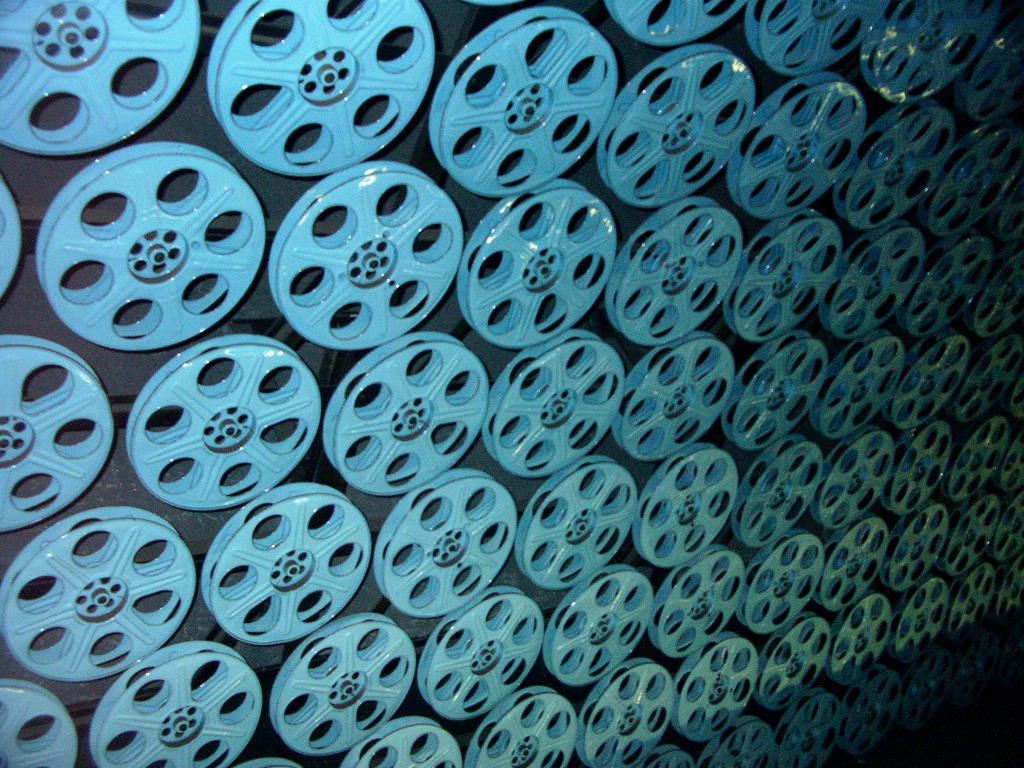

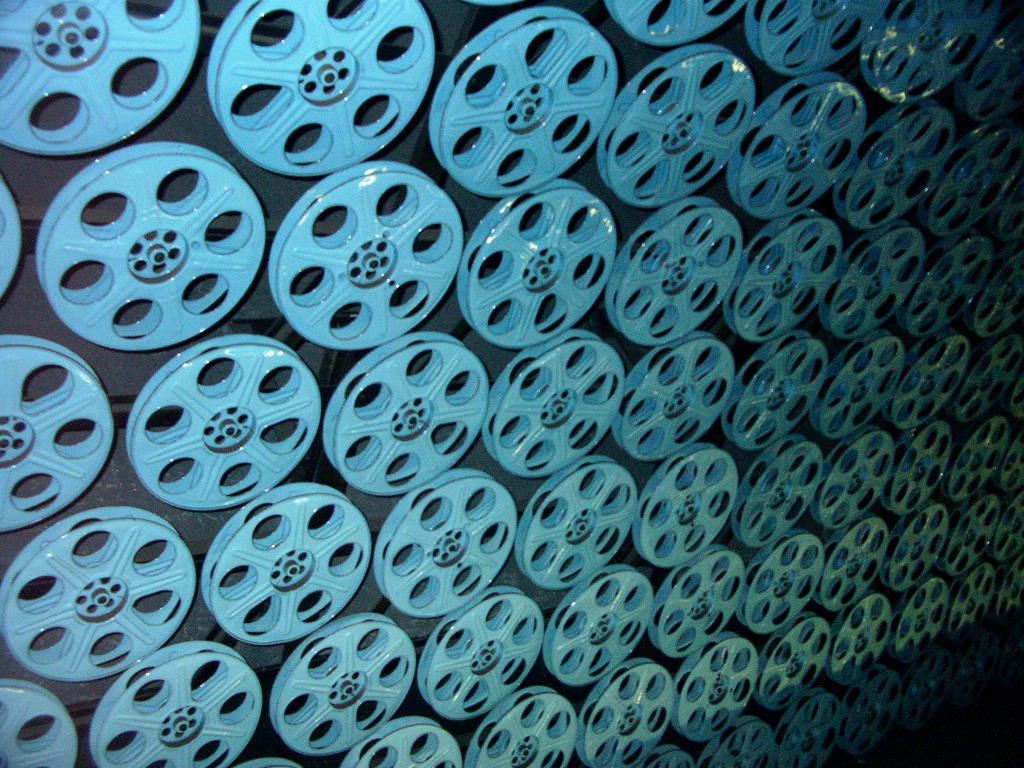

was a wonderful interior of the Hollywood and Vine Station, using projectors and film reels as decor. I took a few pictures of my own.

I recalled that each of these projectors would've cost about $30,000 when new. The fact that they could be left unguarded in a subway station emphasized how obsolete they had become.

(I think the statue of Bullwinkle the Moose from that corner is gone too...) But someone has built a restaurant called Schwab's, a replica of the iconic drugstore, as part of a new deconstructionist multi-use complex at Sunset and Vine.

The food and decor were both very nice.

A Johnnie Walker whiskey billboard showed a film clapperboard for Scene 1, Take 135.

I wondered what the message was: You too can cost the production money by showing up drunk?

I thought this Sprint billboard was so L.A. -- I could barely stand it.
